
“Do you sense that you live in a world that literally makes no fucking sense? A world that’s made to make you feel like you’re going, like, batshit crazy. A world whose fucked up kink is to continually gaslight you? Welcome to Jankspace, Babes.”

This is how Daniel Felstead and Jenn Leung’s 2025 film, Welcome to Jankspace, Babes, opens: with a 3D-rendered Julia Fox, lacquered in high-definition Unreal Engine gloss, purring this diagnosis with her signature vocal fry. This onboarding sequence is Fox’s beckoning into the world of Jankspace, where the feeling of being psychologically (and physically) destabilised is, like, literally the point.

In 2001, architect Rem Koolhaas coined junkspace to describe the residue of modernity’s bureaucratic architecture: the leftover interiors of airports, malls, atriums and escalator wells that exist as infrastructural excess, “what remains after modernisation has run its course.” Felstead and Leung’s jankspace is the digital-age riff on that earlier space. It names the grotesque negative space that the technocapitalism-stooped digital network operates on: our slimy, overheating human bodies. Take a seat. Tune in. Let your brain rot. “It’s the experience of frantically pressing your clammy finger on the smashed screen of your phone that is about to die, as you desperately try to find your power cord so that you can touch ID access your keychain, to get the PIN from the OTP text message, to verify your identity, to access your work email, which has the tracking number for the ‘Ohio Sigma Rizzler’ slogan t-shirt you bought on Shein a fortnight ago,” a dismembered, splayed-out Julia Fox narrates. It’s the feeling of existing in a world that increasingly seems not to have been designed for the human body to exist in. The body becomes the fleshy interference to design around rather than for. So relatable.

There is something disarming about Fox bestowing her wisdom on the viewer this way, not just because the messenger is an it-girl rendered into a goth high-gloss avatar, but because the format is already doing what it’s describing. The jittery ElevenLabs.io cadence, the overstimulated montage, the too-muchness of the interface. In jankspace, content is not a vessel for information (and to be fair, it hasn’t been for a while); it’s merely the foam on the surface of a system that can’t stop vomiting up its insides. And that’s where the slop comes in, as both a symptom and a raison d’être of this theoretical framework of networked culture.

There isn’t much left to say about slop, specifically AI slop (which every mention of slop in this piece refers to), that hasn’t already been memed, litigated, or doom-scrolled to death. Even the non-chronically online can identify what slop looks like, or at least its general visual aesthetic. The spiritual off-ness of it all. Slop has become so ubiquitous that Merriam-Webster crowned it the 2025 Word of the Year, defining it as “digital content of low quality that is produced usually in quantity by means of artificial intelligence.” 2025 being the year of slop makes sense. This was the year OpenAI’s Sora let users puppet American influencer Jake Paul into hyperrealistic beauty tutorials. The year Google’s Nano Banana triggered panic when people realised they could no longer reliably tell a real selfie from an AI-generated one. And then there is American media personality Charlie Kirk – always Charlie Kirk – whose posthumous AI-assisted existence refuses to log off. The “We Are Charlie Kirk” anthem alone spawned enough remixes to qualify as a minor genre. The Brazilian Phonk remix packs a particular punch.

This is precisely where and why Felstead and Leung’s framework comes in handy. In a digital landscape that’s not just messy but structurally janky, knowing how to spot slop is almost irrelevant. Congratulations, you can identify the waxy cheekbones or the six-fingered hands (which will probably not be the case in a few months, or by the time this is published in print). Now what? The hyperrealism discourse is a red herring. Whether you can tell that the AI influencer you’ve been thirst-following for a year is, in fact, a hallucination with a ring light is not the (most) urgent question.

“What I’m interested in is the value of content within these platform dynamics. It is a sort of development of [meme content], but optimised in a certain way. It removes this need for meaning, like an attempt to almost create a kind of drug through a kind of visual or aesthetic. It’s like trying to just create a kind of affect in you, a kind of bodily response, which then would trigger you liking it, disliking it, commenting on it, and then circulating it,” Felstead tells ICON MENA. “What I was interested in was this idea of [slop] as a template… It gets rid of the excess fat of meaning. It’s kind of optimising itself on a formal and structural level. So the aesthetics of this era are a byproduct of this optimisation.”

What looms over this optimisation fantasy is something that has been formally diagnosed as well: enshittification. A term popularised by tech critic Cory Doctorow, and very often called upon in slop discourse, enshittification names the lifecycle of digital platforms under late-stage capitalism. First, platforms are good to users in order to attract them. Then, they’re good to advertisers to monetise those users. Finally, they screw both in order to extract maximum value for shareholders. Slop is the logical endpoint of this enshittified swamp. When platforms optimise purely for engagement and extraction, meaning is simply inefficient, and if anything, a liability that makes the whole consumption process slower. Enshittification is the infrastructural condition that makes jankspace possible, a network so over-leveraged and incentive-warped that it prioritises circulation over everything else, at the cost of everything else. The foam keeps churning because the churn is the point. The presence of the content is the point itself, you don’t have to read into it too much.

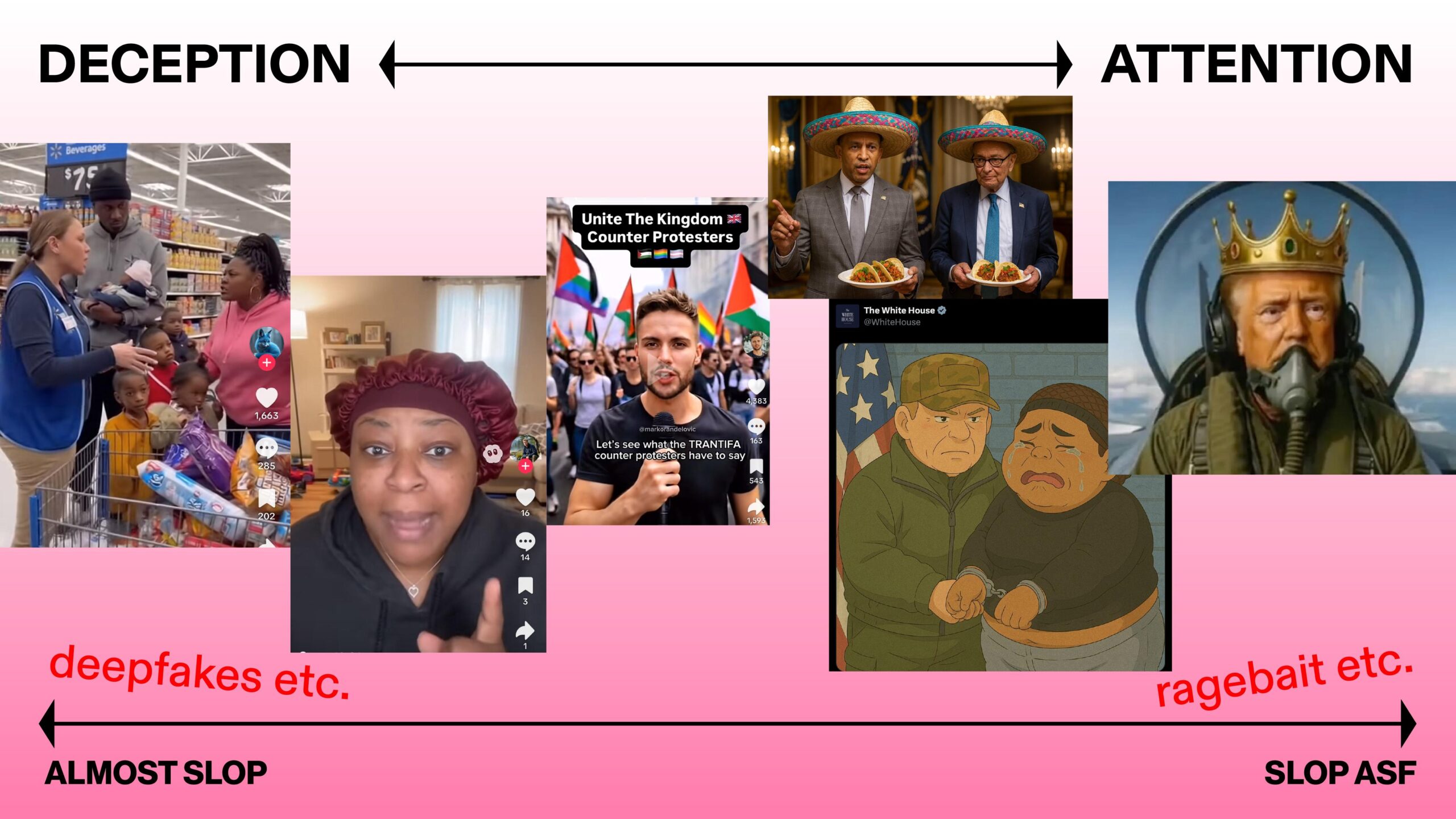

In this digital ecosystem, or, dare I say, in this post-semiotic terrain, where signs are hollowed out to their most bare-bones form, thinking of slop as a template helps put things in perspective. A template is mutable and malleable; it is not the single thing that the now catch-all term has come to suggest. In a recent panel talk at Ibraaz London convened by writer and thinker Shumon Basar, featuring designer and thinker Al Hassan Elwan (POSTPOSTPOST) and internet folklorist Günseli Yalcinkaya, Elwan pushed this idea further, presenting a slop continuum. At one end, you have the almost-convincing deepfake, the slightly cursed supermarket altercation, and generally content that, on the surface, still wants to pass as real. At the other, things start breaking down into the absurd. You have AI kings with gamer headsets, cartoon dictators, and general ragebait so concentrated it does earn the label of slop, goop and all. This presents a clean transition from deception, content trying to fake its reality, to attention, content that presents itself as “obviously AI” yet gnaws at your attention span anyway. It’s the type of content that jolts you hard enough to tap, type, seethe, share, etc. It’s that shift from “almost slop” to “slop asf” that disembowels the content, exposing its true function.

According to Elwan, we are already in the slop slop era. There is so much slop, and so much discourse about slop, that we have kind of moved beyond slop as something that merely exists. But there is another important distinction to draw here: “brain rot,” Oxford’s 2024 Word of the Year, is not the same thing as slop. For Elwan, the difference is not about quality or even absurdity, but about whether there is still an interactive human element involved, a semiotic operation infused into the imagery that gives the signs meaning. Brain rot, as he sees it, is messy, excessive, sometimes unbearable, but it is participatory, with people inventing characters, building micro-mythologies, storylines, and so on. It produces lore. It produces codes. It requires a collective agreement to enter the bit and stay there for whatever kick one would get. Here, one can think about the Italian brain rot universe of 2024, where Tralalero Tralala and Ballerina Cappuccina reigned supreme. For the unfamiliar, there’s a whole Italian brain rot wiki to guide you through it. Slop, by contrast, does not require you to enter anything. It does not accumulate history. It does not care if you are there. It operates on you, not with you. It is the AI-generated shark in Nike shoes that flashes across your screen and disappears. It is the video of the cartoon egg explaining how to cook itself. It is content that exists only to circulate, to fill, to keep the feed in motion. Brain rot implies a kind of communal hallucination; slop implies automation, the hallucination and machination of the machine alone. One suggests an internet that is still being co-authored, however deranged; the other suggests an internet that is simply being inhabited. And for Elwan and many other internet thinkers, that is the real distinguishing factor. Not taste. Not aesthetics. But whether we are still building worlds together, or whether we have become little more than extensions of the machine that scrolls them past us.

“Collective lore building is how memes work. It’s very human-centric. On the other end you have slop, where you basically end up being a data center flesh extension… like a battery for the tech oligarchy,” he explains.

And so, slop works. Not accidentally. Not ironically. It works because it is perfectly fitted to an enshittified platform ecology that has killed meaning. It is the ideal template for engagement, retention, watch time, and every other KPI the user is measured against. None of this is necessarily breaking news. You give attention, the Zuck sells you ads. Our focus is monetised by default, hence attention economy discourse. The horse is kind of dead by now. But in this slopscape, a paradigm shift of sorts is helpful in attaching some meaning to the slop. Swap “attention economy” for “distraction economy,” and the inner workings unfurl. Distraction is more profitable than attention because it is endless. Attention implies duration, it is a finite resource. Distraction implies fragmentation and quantity. The latter scales better, it comes at a much cheaper cost so it is perfect for optimisation. A distracted user, their dopamine circuits deep-fried at this point, scrolls compulsively. Slop thrives here because it is built for interruption. It doesn’t ask to be understood. On a visual level, it barely asks to be seen, but we see (or watch) it anyway. So for all the talk about a dead internet, slop is not the decay of said internet. It is a refinement process, the machine working exactly as it is intended to in the good year of 2026. You don’t need to hold attention when you can manufacture distraction at scale.

“Social media performs an alchemical transformation of attention into distraction. Susan Sontag once defined the intellectual as “someone who pays attention to the world.” We now pay distraction to the world… Slop is the finely engineered content fit for the finely distracted mind,” Shumon Basar explains. “Distraction is a political tool par excellence, too: how to keep up with the litany of crimes and misdemeanors across Earth when the story of this orphan kitten who grows up to be a chaddified Michelin starred sushi chef is using up all of my brain’s processing power? Dopamine is the handmaiden to distraction, and slop is a pharmacological weapon imbibed through our fingers.”

To echo Basar’s sentiment, once you accept that distraction is the fuel that keeps the machine locked in and your doomscroll endless, the rest of the picture sketches itself. Slop does not merely compete with everything else on the feed; it is actively pushed by the algorithm. In short, the reason we should “care” about interrogating slop is that it is, on many levels, a tool of immense power.

In February of last year, American President Donald Trump set the internet ablaze when he posted AI-generated slop of a Gaza reimagined as a luxury riviera. Much of his social media output since has been slop or slop-adjacent. Less than a year later, those same fever-dream renderings were presented as policy at the 2026 World Economic Forum, where he unveiled a futurist reconstruction vision for Gaza, reviving “Middle East Riviera” fantasies complete with luxury waterfront developments and a US-backed “Board of Peace” tasked with overseeing its implementation. It now becomes understandable why media scholars like Roland Meyer have described generative AI output as the aesthetic of digital fascism, Walter Benjamin’s thesis on political spectacle amplified for the platform era. It normalises the spectacle, the ragebait that makes its way onto your IG Stories faster than literally anything else. And at the risk of making all of this sound too US-centric as kind of a backhanded America joke, that same sloppy aesthetic has made its way elsewhere, to places far from the US but closer to here. MLGANOW (Make Lebanon Great Again Now) is a YouTube channel launched in March 2025 and aggressively pushed, advertised, and forced onto Lebanese social media feeds. The page hosts a steady stream of AI-generated videos in which the US-backed sitting President Joseph Aoun and Prime Minister Nawaf Salam address the Lebanese people directly. The content is, by any measure, vapid, and revealing of popular Lebanese political discourse more broadly. A textual analysis of the content merits a thesis of its own. So, for all intents and purposes, this is a case study of slop as a portable, cheap, ready-to-make vehicle for propaganda, soft power, banal nationalism, ragebait, etc.

But AI slop also functions at an order higher than mere saturation, beyond overwhelming the feed and distracting the viewer. This automation of perception through AI-generated content, and slop specifically, pushes us fully into Paul Virilio territory, into what the French theorist calls the logistics of perception: the organisation of sight. Or rather, the weaponisation of it.

For the philosopher (who coined the term “dromology” for the philosophical study of speed), speed is power because of the way it reorganises vision. The premise is that the speed of transmission determines what can be seen and when, making perception anything but a neutral affair. To see faster is to pre-empt. The history of imaging, from high-speed photography to aerial reconnaissance to computerised targeting, and now generative AI, is a history forged in war. Britain, after the First World War, invested in the logistics of perception: propaganda films, detection systems, transmission equipment, etc. Control the field of sight, control the field of action. In The Vision Machine (1994), Virilio warns that we are entering an era of automated perception, in which machines move from simply recording events to interpreting them – they “see and foresee in our place,” producing “rational illusions.” Vision is industrialised. Virilio’s theorisation on the logistics and automation of perception at the cusp of the 21st century is almost a given at this point when we think of imaging technology, but this automation logic aligns perfectly with how AI slop, at the Donald Trump level, is made to function and produce “meaning.” It is the logical logistical continuation of a visual order that is now about pre-emption and simulation. The image stages reality in advance. First as AI mock-ups. Then as viral posts. Then as godawful sloppy graphics at summits. The slop image precedes the decision and prepares responses. Virilio writes that if seeing is already a kind of foreseeing, then it’s no wonder that forecasting as an industry was on the rise at the time of writing. The “type” of slop here exploits that to the nth degree. It saturates the nervous system at a speed that neutralises reflection time. One could argue that this, to a degree, is the expansion of Virilio’s theory. The military-industrial complex industrialised vision. Platform capitalism has democratised it. “Slop asf,” to borrow from Elwan, is what happens when the vision machine manufactures the perceptual conditions in which the world will be accepted. This is the paradigm shift: manufacturing content becomes a pre-conditioning of catastrophe, or simply of the future.

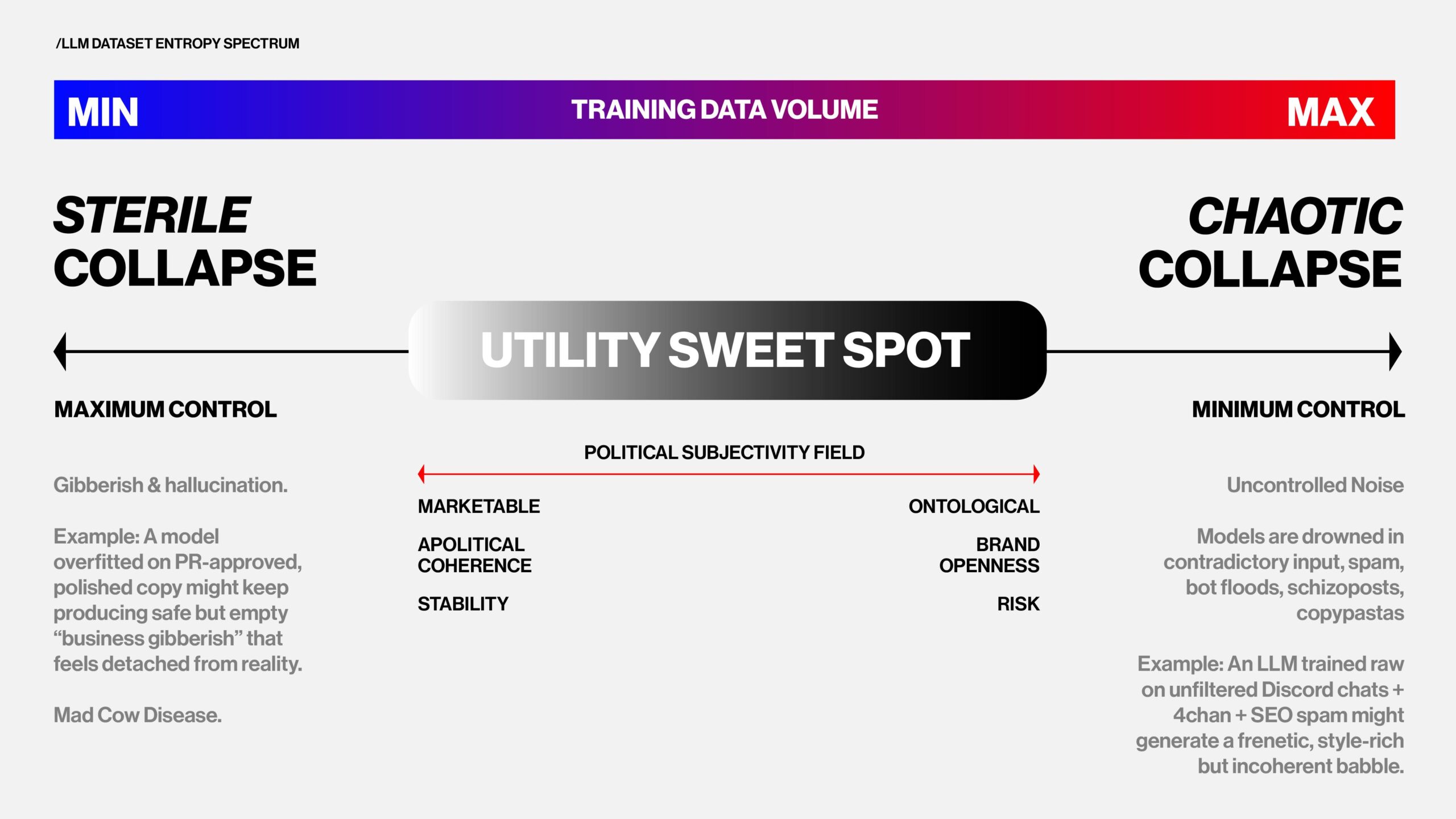

So, given the doom of it all (which this is absolutely not personal advocacy for), and in the spirit of looking through the goop, examining the machinations that make slop possible is an interesting thought experiment. The training data for generative AI models is, in general, a black box, much like the inner workings of the social media algorithm itself. The data that Large Language Models (LLMs) like ChatGPT are trained on falls somewhere between two ends of a spectrum: one where data is completely sterile and “unhuman-like,” and another that is probably too human, loaded with symbolic meaning and data noise like schizoposts, Discord chats and incoherent human interaction. So, an LLM output is just human enough, with enough em dashes to read convincingly. Because if the system is trained on the human archive in all its mess, violence, irony, and excess, it will metabolise and mirror it back to us in the ugliest of ways.

Take Elon Musk’s xAI chatbot and incel savior/oracle Grok, which has infamously complied with user requests to generate Nazi propaganda and digitally undress women. It only takes so many “@grok, is this real” prompts to have the chatbot praising Adolf Hitler. This reveals something deeper about how AI digests the human archive. If an AI system is trained on output that itself is generated by other AI systems, or on sprawling datasets full of “junk text,” it begins to suffer from a kind of autophagy, consuming derivatives of derivatives until errors, hallucinations, and incoherencies stack up. Turns out, an LLM can get brain rot by consuming brain rot. When the underlying training material is saturated with slop, the system’s generative capacities degrade accordingly.

Hence the critical point: these systems, practically, cannot hallucinate anything outside of the human archive. AI generation is, by default, a backward looking operation. AI models cannot understand symbolic meanings, they understand patterns. And as such, they generate patterns. They do not understand metaphors or cultural codes. That limitation, the fact that AI’s so-called creativity is derivative by design, is an opening. It gives us a leverage point to insist on regulation, accountability, and scrutiny. It gives us a way to refuse slop the seriousness it craves, and to engage (or not engage) with it accordingly. Maybe even sabotage it, but that’s a discussion for another time.

This brings the conversation back to more uneasy territory: us, the users. Our interaction with the internet, and technology in general, has always treaded the fine line between anxiety and euphoria. As Basar describes it for himself: “Anxiety is the ambient condition of a world where everything solid has melted into slop. When it comes to why digital euphoria is twinned with anxiety, it’s because even though we have read the health studies that say Instagram is as bad as 15 cigarettes a day, and despite the hideous biases embedded in the algorithms as designed by owners whose fealty is towards neocolonial power, here I am, at 3:04 AM, unwilling to close the app. Knowing you’re in an abusive relationship — that may well be funding genocides you also denounce on the same app — and not leaving to me is part of this specific anxiety, as though I’m watching the downfall of civilisation in slow motion while playing a part in it.” Or in simpler terms, one could imagine Unreal Engine Julia Fox saying: “Like, seriously, just get off that fucking phone.” But, until we find a way to actually put down the phone, we might as well just rot our brains, but critically, meticulously, and selectively. Enjoy.